nvblox: ESDF, TSDF, and Planning with Them


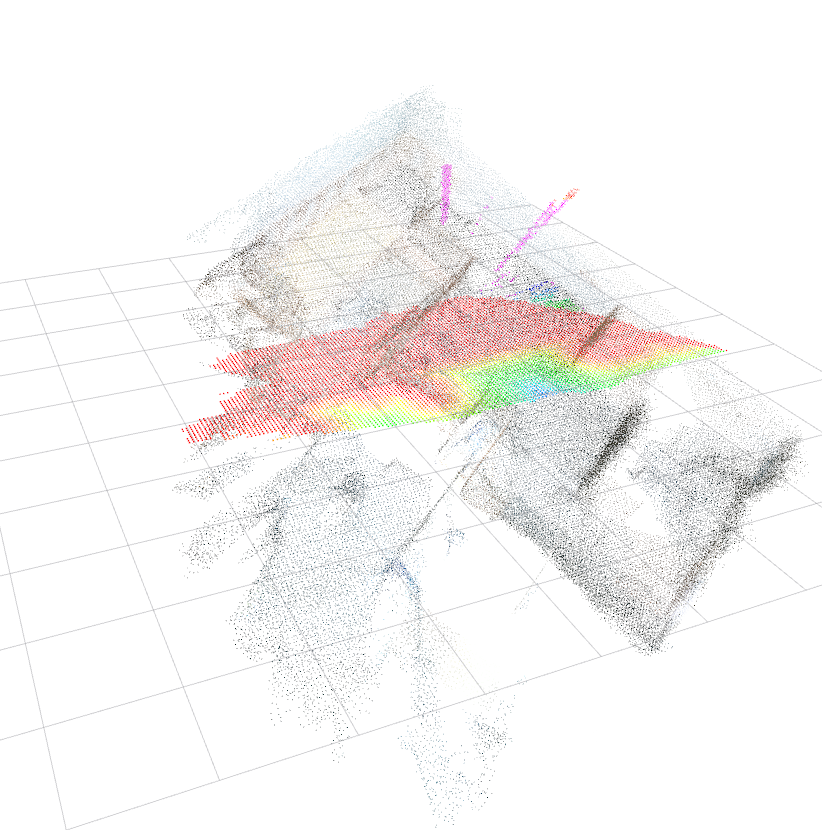

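nvblox is a GPU-accelerated 3D mapping library released by NVIDIA. It focuses on integrating the data a robot receives from its sensors (LiDAR, depth cameras, etc.) into a voxel-based TSDF / ESDF map in real time.

In high-resolution 3D environments, CPU-based voxel maps become a memory and compute bottleneck, and real-time planning issues a large number of ESDF queries. nvblox therefore does all of this on the GPU, aiming to update the TSDF/ESDF in real time even in large 3D environments and to provide a distance field that a planner can use directly.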

ESDF

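An ESDF (Euclidean Signed Distance Field) is a 3D map that gives, for every point in space, the straight-line distance to the nearest obstacle. Because the value is signed, it also tells you whether a point is inside or outside an obstacle.

For example, given a room with obstacles, the ESDF is a precomputed table that answers "how many meters from here to the nearest wall?" for every point in the room: the distance is positive outside a wall, negative inside it, and 0 on the wall surface.

A planner can therefore use the ESDF to raise the cost near obstacles and naturally produce paths that steer around them.

A TSDF (Truncated Signed Distance Field) is a distance field built to reconstruct surfaces well, whereas an ESDF is a distance field that lets the robot look up the shortest distance to an obstacle from anywhere, so that it can move without colliding.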
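To make the idea concrete, here is a minimal sketch in plain Python. It is not nvblox code: it brute-forces a signed distance grid for a tiny 2D occupancy map and turns the distance into a planner cost. The grid, the `esdf_2d` and `obstacle_cost` helpers, and the 1.0 m safety margin are all illustrative assumptions. The sign convention follows the text (positive outside, negative inside); distances are measured between cell centers, so the zero crossing falls between cells.

```python
import math


def esdf_2d(occupied, cell_size):
    """Brute-force signed distance per cell: positive in free space, negative inside obstacles."""
    rows, cols = len(occupied), len(occupied[0])
    cells = [(r, c) for r in range(rows) for c in range(cols)]
    field = [[0.0] * cols for _ in range(rows)]
    for r, c in cells:
        # Distance (in cells) to the nearest cell of the opposite type.
        nearest = min(
            (math.hypot(r - rr, c - cc) for rr, cc in cells
             if occupied[rr][cc] != occupied[r][c]),
            default=math.inf,
        )
        sign = -1.0 if occupied[r][c] else 1.0
        field[r][c] = sign * nearest * cell_size
    return field


def obstacle_cost(distance, safety_margin=1.0):
    """Zero beyond the margin, growing linearly toward the obstacle, infinite inside it."""
    if distance <= 0.0:
        return math.inf
    return max(0.0, safety_margin - distance)


# Hypothetical 3x4 map with a two-cell obstacle in the middle row (1 = occupied).
grid = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
field = esdf_2d(grid, cell_size=0.5)
print(field[1][0], field[1][1])
print(obstacle_cost(field[1][0]), obstacle_cost(field[1][1]))
```

A real ESDF is computed incrementally rather than by brute force, but the lookup side is the same: the planner reads one distance per query point and converts it to a cost.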

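Key parameters

- voxel_size

  - edge length of one voxel, i.e. the map resolution

- truncation_distance

  - how far from the surface the TSDF is kept meaningful

- max_integration_distance_m

  - depth beyond this distance is ignored entirely

- esdf_max_distance_m

  - the ESDF is computed only up to this distance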
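The sketch below shows one way these parameters could act on a single voxel. It is a generic projective-TSDF illustration under stated assumptions, not nvblox's actual implementation: `projective_tsdf` and `clamp_esdf` are hypothetical helpers, the numeric values are made up, and capping the ESDF at `esdf_max_distance_m` is only one possible reading of "computed only up to this distance".

```python
def projective_tsdf(measured_depth, voxel_depth, truncation_distance,
                    max_integration_distance_m):
    """Truncated signed distance for one voxel along a camera ray, or None if skipped."""
    # Invalid depth, or depth beyond the integration range: ignore the measurement.
    if measured_depth <= 0.0 or measured_depth > max_integration_distance_m:
        return None
    # Positive in front of the observed surface, negative behind it.
    sdf = measured_depth - voxel_depth
    # Far behind the surface the voxel is occluded, so nothing is known about it.
    if sdf < -truncation_distance:
        return None
    # In front of the surface, cap the value at the truncation distance.
    return min(sdf, truncation_distance)


def clamp_esdf(distance, esdf_max_distance_m):
    """Cap an ESDF value at the maximum computed distance (assumed behavior)."""
    return max(-esdf_max_distance_m, min(distance, esdf_max_distance_m))


# Hypothetical values chosen only for illustration.
voxel_size = 0.05
truncation_distance = 4 * voxel_size

print(projective_tsdf(2.0, 1.95, truncation_distance, 5.0))  # just in front of the surface
print(projective_tsdf(2.0, 1.00, truncation_distance, 5.0))  # far in front: truncated
print(projective_tsdf(2.0, 2.50, truncation_distance, 5.0))  # occluded: skipped
print(projective_tsdf(7.0, 1.00, truncation_distance, 5.0))  # beyond range: skipped
print(clamp_esdf(3.7, 2.0))
```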

TSDF from the new observation

⬇

Weighted average with the existing TSDF

⬇

Accumulated TSDF

⬇

ESDF computation
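The weighted-average step in this flow can be sketched for a single voxel as follows. This is the standard running weighted average used in TSDF fusion, written as a plain-Python illustration; `fuse_tsdf`, the per-observation weight of 1.0, and the `max_weight` cap are assumptions, not values taken from nvblox.

```python
def fuse_tsdf(old_distance, old_weight, new_distance, new_weight=1.0, max_weight=100.0):
    """Merge one new TSDF observation into a voxel's accumulated value."""
    total = old_weight + new_weight
    fused = (old_distance * old_weight + new_distance * new_weight) / total
    # Capping the weight keeps the voxel responsive to later changes in the scene.
    return fused, min(total, max_weight)


# A voxel starts unobserved (weight 0) and receives three noisy observations.
distance, weight = 0.0, 0.0
for observed in [0.10, 0.14, 0.12]:
    distance, weight = fuse_tsdf(distance, weight, observed)

print(distance, weight)
```

With equal weights the accumulated value is simply the mean of the observations, which is why sensor noise averages out as the same surface is seen repeatedly.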

Installation

Only Ubuntu 22.04 and 24.04 are supported, so on Ubuntu 20.04 the installation has to go through Docker.

sed -i \
  's|ARG BASE_IMAGE=nvcr.io/nvidia/cuda:12.8.0-devel-ubuntu24.04|ARG BASE_IMAGE=nvidia/cuda:12.2.0-devel-ubuntu22.04|' \
  docker/Dockerfile.deps

head -n 3 docker/Dockerfile.deps

# Expected output

ARG BASE_IMAGE=nvidia/cuda:12.2.0-devel-ubuntu22.04

FROM ${BASE_IMAGE}

sed -i \
  's|ARG BASE_IMAGE=nvcr.io/nvidia/cuda:12.8.0-devel-ubuntu24.04|ARG BASE_IMAGE=nvidia/cuda:12.2.0-devel-ubuntu22.04|' \
  docker/Dockerfile.test_nvblox_torch

# If the image was built incorrectly, remove it and rebuild

docker rm -f nvblox_deps 2>/dev/null || true

docker rmi -f nvblox_deps 2>/dev/null || true

docker image prune -f

./docker/run_docker.sh

# All at once

cd ~/ziwon/popular_repos/nvblox && \
sed -i 's|ARG BASE_IMAGE=nvcr.io/nvidia/cuda:12.8.0-devel-ubuntu24.04|ARG BASE_IMAGE=nvidia/cuda:12.2.0-devel-ubuntu22.04|' docker/Dockerfile.deps && \
sed -i 's|ARG BASE_IMAGE=nvcr.io/nvidia/cuda:12.8.0-devel-ubuntu24.04|ARG BASE_IMAGE=nvidia/cuda:12.2.0-devel-ubuntu22.04|' docker/Dockerfile.test_nvblox_torch && \
docker rm -f nvblox_deps 2>/dev/null || true && \
docker rmi -f nvblox_deps 2>/dev/null || true && \
docker image prune -f && \
./docker/run_docker.sh

# Inside the container: check that torch and nvblox_torch import

(venv) ubuntuurl@ubuntuurl-DX5-Black-Intel:/workspaces/nvblox$ python3 -c "import torch; print('torch', torch.__version__, 'cuda', torch.version.cuda, 'available', torch.cuda.is_available())"

python3 -c "import nvblox_torch; print('nvblox_torch import OK')"

/opt/venv/lib/python3.10/site-packages/torch/_subclasses/functional_tensor.py:258: UserWarning: Failed to initialize NumPy: No module named 'numpy' (Triggered internally at ../torch/csrc/utils/tensor_numpy.cpp:84.)

cpu = _conversion_method_template(device=torch.device("cpu"))

torch 2.4.0+cu121 cuda 12.1 available True

nvblox_torch import OK

cd /workspaces/nvblox

rm -rf build

mkdir build && cd build

# Bypass the ccache-wrapped compiler / nvcc

export CCACHE_DISABLE=1

CC=/usr/bin/gcc CXX=/usr/bin/g++ cmake .. -DCMAKE_BUILD_TYPE=Release

cmake --build . -j

Docker run command

xhost +local:docker

docker run -it --rm \
  --gpus all \
  --net=host \
  --ipc=host \
  -e DISPLAY=$DISPLAY \
  -v /tmp/.X11-unix:/tmp/.X11-unix \
  -v /home/ubuntuurl:/home/ubuntuurl \
  nvblox_deps:latest \
  bash

Example

Annoyingly, nvblox needs three inputs: 1) depth images, 2) the camera pose (in the world frame), and 3) the camera parameters.
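All three inputs are needed because a depth pixel only becomes a point in the map once it is back-projected with the intrinsics and moved into the world frame with the pose. A minimal pinhole-camera sketch, assuming made-up intrinsics (`fx`, `fy`, `cx`, `cy`) and a hypothetical 4x4 `T_world_camera` matrix rather than real D455 calibration:

```python
def backproject(u, v, depth_m, fx, fy, cx, cy):
    """Pixel (u, v) with metric depth -> 3D point in the camera optical frame."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def transform_point(T, point):
    """Apply a 4x4 homogeneous transform (row-major nested lists) to a 3D point."""
    x, y, z = point
    return tuple(row[0] * x + row[1] * y + row[2] * z + row[3] for row in T[:3])


# Hypothetical intrinsics for a 640x480 image.
fx, fy, cx, cy = 600.0, 600.0, 320.0, 240.0

# Hypothetical camera pose in the world frame: no rotation, camera at (1.0, 0.0, 0.5).
T_world_camera = [
    [1.0, 0.0, 0.0, 1.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.5],
    [0.0, 0.0, 0.0, 1.0],
]

point_camera = backproject(620, 240, 2.0, fx, fy, cx, cy)
point_world = transform_point(T_world_camera, point_camera)
print(point_camera, point_world)
```

If any of the three is wrong (depth scale, intrinsics, or pose), the points land in the wrong voxels, which is why the pose source matters so much in the steps that follow.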

Extract this data from a rosbag. The data was recorded with a RealSense D455.

/camera/color/image_raw

/camera/color/camera_info

/camera/aligned_depth_to_color/image_raw

/camera/aligned_depth_to_color/camera_info

/clock

/tf

Clone https://github.com/ethz-asl/nvblox_ros1 (ROS1 wrappers for GPU-accelerated volumetric mapping with nvblox) and set up Docker with it. Compilation runs into a few problems; changing the CMake version and editing the CMakeLists.txt in ~/nvblox_ws/src/nvblox_ros1/nvblox_ros (as root inside the container) as follows makes it compile.

# SPDX-FileCopyrightText: NVIDIA CORPORATION & AFFILIATES

# Copyright (c) 2021-2022 NVIDIA CORPORATION & AFFILIATES. All rights reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

#

# SPDX-License-Identifier: Apache-2.0

cmake_minimum_required(VERSION 3.16...3.20)

include(ExternalProject)

project(nvblox_ros LANGUAGES CXX CUDA)

#add_compile_options(-Wall -Wextra -O3)

#add_compile_options($<$<COMPILE_LANGUAGE:CXX>:-Wall -Wextra>)

add_compile_options(

$<$<COMPILE_LANGUAGE:CXX>:-Wall>

$<$<COMPILE_LANGUAGE:CXX>:-Wextra>

$<$<COMPILE_LANGUAGE:CXX>:-Wpedantic>

)

if(CMAKE_COMPILER_IS_GNUCXX OR CMAKE_CXX_COMPILER_ID MATCHES "Clang")

set(CMAKE_EXPORT_COMPILE_COMMANDS ON)

endif()

set(CMAKE_CXX_STANDARD 17)

set(CMAKE_CXX_STANDARD_REQUIRED ON)

# Default to release build

if(NOT CMAKE_BUILD_TYPE OR CMAKE_BUILD_TYPE STREQUAL "")

set(CMAKE_BUILD_TYPE "Release" CACHE STRING "" FORCE)

endif()

message( STATUS "CMAKE_BUILD_TYPE: ${CMAKE_BUILD_TYPE}" )

################

# DEPENDENCIES #

################

find_package(catkin REQUIRED COMPONENTS

roscpp

std_msgs

std_srvs

sensor_msgs

geometry_msgs

visualization_msgs

tf2_ros

cv_bridge

message_filters

nvblox_msgs

)

find_package(CUDA REQUIRED)

###################################

## catkin specific configuration ##

###################################

## The catkin_package macro generates cmake config files for your package

## Declare things to be passed to dependent projects

## INCLUDE_DIRS: uncomment this if you package contains header files

## LIBRARIES: libraries you create in this project that dependent projects also need

## CATKIN_DEPENDS: catkin_packages dependent projects also need

## DEPENDS: system dependencies of this project that dependent projects also need

catkin_package(

INCLUDE_DIRS

include

LIBRARIES

${PROJECT_NAME}_lib

CATKIN_DEPENDS

roscpp

std_msgs

std_srvs

sensor_msgs

geometry_msgs

visualization_msgs

tf2_ros

cv_bridge

message_filters

nvblox_msgs

)

# Set up correct include directories

include_directories(AFTER include ${catkin_INCLUDE_DIRS})

include_directories(

${catkin_INCLUDE_DIRS}

${nvblox_INCLUDE_DIRS}

)

########

# CUDA #

########

set(CMAKE_CUDA_FLAGS "${CMAKE_CUDA_FLAGS} --expt-relaxed-constexpr -Xcudafe --display_error_number --disable-warnings ")

set(CUDA_NVCC_FLAGS "${CUDA_NVCC_FLAGS} --compiler-options -fPIC")

include_directories("${CMAKE_CUDA_TOOLKIT_INCLUDE_DIRECTORIES}")

##########

# NVBLOX #

##########

message(STATUS "Installing nvblox.")

include(cmake/nvblox.cmake)

find_package(nvblox QUIET)

# Trick to re-run cmake.

if(NOT ${nvblox_FOUND})

# Force a rerun to re-scan for nvblox so that we basically run cmake twice at once.

# Yo dawg...

message("Rescanning for nvblox")

add_custom_target(rescan_for_nvblox ${CMAKE_COMMAND} ${CMAKE_SOURCE_DIR} DEPENDS nvblox_interface)

else()

#Rescan becomes a dummy target after first build

#this prevents cmake from rebuilding cache/projects on subsequent builds

message("nvblox already found!")

add_custom_target(rescan_for_nvblox)

endif()

#############

# LIBRARIES #

#############

add_library(${PROJECT_NAME}_lib SHARED

src/lib/conversions/image_conversions.cu

src/lib/conversions/layer_conversions.cu

src/lib/conversions/mesh_conversions.cpp

src/lib/conversions/pointcloud_conversions.cu

src/lib/conversions/esdf_slice_conversions.cu

src/lib/visualization.cpp

src/lib/transformer.cpp

src/lib/mapper_initialization.cpp

src/lib/nvblox_node.cpp

src/lib/nvblox_human_node.cpp

)

add_dependencies(${PROJECT_NAME}_lib

${catkin_EXPORTED_TARGETS}

nvblox_interface

rescan_for_nvblox

)

if (${nvblox_FOUND})

target_link_libraries(${PROJECT_NAME}_lib

nvblox::nvblox_lib

nvblox::nvblox_eigen

${catkin_LIBRARIES})

get_target_property(CUDA_ARCHS nvblox::nvblox_lib CUDA_ARCHITECTURES)

set_property(TARGET ${PROJECT_NAME}_lib APPEND PROPERTY CUDA_ARCHITECTURES ${CUDA_ARCHS})

target_include_directories(${PROJECT_NAME}_lib PUBLIC

$<BUILD_INTERFACE:${CMAKE_CURRENT_SOURCE_DIR}/include>

$<INSTALL_INTERFACE:include>

${catkin_INCLUDE_DIRS})

target_include_directories(${PROJECT_NAME}_lib SYSTEM BEFORE PUBLIC

$<TARGET_PROPERTY:nvblox::nvblox_eigen,INTERFACE_INCLUDE_DIRECTORIES>)

else()

message(WARNING "No nvblox found!")

endif()

############

# BINARIES #

############

add_executable(nvblox_node

src/nvblox_node_main.cpp

)

target_link_libraries(nvblox_node ${PROJECT_NAME}_lib)

add_dependencies(nvblox_node

${catkin_EXPORTED_TARGETS}

)

add_executable(nvblox_human_node

src/nvblox_human_node_main.cpp

)

target_link_libraries(nvblox_human_node ${PROJECT_NAME}_lib)

add_dependencies(nvblox_human_node

${catkin_EXPORTED_TARGETS}

)

###########

# INSTALL #

###########

install(

DIRECTORY include/${PROJECT_NAME}/

DESTINATION ${CATKIN_PACKAGE_INCLUDE_DESTINATION})

install(

TARGETS ${PROJECT_NAME}_lib nvblox_interface

EXPORT ${PROJECT_NAME}

ARCHIVE DESTINATION ${CATKIN_PACKAGE_LIB_DESTINATION}/${PROJECT_NAME}

LIBRARY DESTINATION ${CATKIN_PACKAGE_LIB_DESTINATION}/${PROJECT_NAME}

RUNTIME DESTINATION ${CATKIN_PACKAGE_BIN_DESTINATION}

INCLUDES DESTINATION include/${PROJECT_NAME}/

)

enable_language(CUDA)

set(CMAKE_CUDA_STANDARD 17)

set(CMAKE_CUDA_STANDARD_REQUIRED ON)

set(CMAKE_CUDA_FLAGS "${CMAKE_CUDA_FLAGS} --expt-relaxed-constexpr --expt-extended-lambda")

set(CMAKE_CUDA_ARCHITECTURES all)

target_link_libraries(nvblox_ros_lib

${catkin_LIBRARIES}

${nvblox_LIBRARIES}

${CUDA_CUDART_LIBRARY}

)

target_link_libraries(nvblox_node

nvblox_ros_lib

${nvblox_LIBRARIES}

${CUDA_CUDART_LIBRARY}

)

target_link_libraries(nvblox_human_node

nvblox_ros_lib

${nvblox_LIBRARIES}

${CUDA_CUDART_LIBRARY}

)

If launching produces output like this:

[INFO] [1767449995.425770249]: Getting parameters from parameter server.

[INFO] [1767449995.426572945]: static_projective_layer_type: TSDF (for occupancy set the use_static_occupancy_layer parameter)

CUDA error = 35 at /root/nvblox_ws/src/nvblox_ros1/nvblox/nvblox/include/nvblox/core/internal/impl/unified_ptr_impl.h:48 'cudaMallocHost(&cuda_ptr, sizeof(T))'. Error string: CUDA driver version is insufficient for CUDA runtime version.

[nvblox_node-1] process has died [pid 27337, exit code 99, cmd /root/nvblox_ws/devel/.private/nvblox_ros/lib/nvblox_ros/nvblox_node depth/image:=/data/depth_image depth/camera_info:=/data/depth_image/camera_info color/image:=/data/color_image color/camera_info:=/data/color_image/camera_info __name:=nvblox_node __log:=/root/.ros/log/4a06a08e-e8a5-11f0-8d97-918ba1481507/nvblox_node-1.log].

log file: /root/.ros/log/4a06a08e-e8a5-11f0-8d97-918ba1481507/nvblox_node-1*.log

all processes on machine have died, roslaunch will exit

shutting down processing monitor...

... shutting down processing monitor complete

done

If this error appears, modify run_docker.sh as follows.

docker run -it --rm \
  --gpus all \
  --runtime=nvidia \
  -e NVIDIA_VISIBLE_DEVICES=all \
  -e NVIDIA_DRIVER_CAPABILITIES=compute,utility \
  --env="DISPLAY=$DISPLAY" \
  --env="FRANKA_IP=$FRANKA_IP" \
  --volume=$WORKSPACE:/root/nvblox_ws \
  --volume=/home/$USER/data:/root/data \
  --volume="/tmp/.X11-unix:/tmp/.X11-unix:rw" \
  --env="XAUTHORITY=$XAUTH" \
  --volume="$XAUTH:$XAUTH" \
  --net=host \
  --privileged \
  --name=$NAME \
  ${DOCKER} \
  bash

Getting odometry with RTAB-Map

nvblox needs the transform between the world and camera frames. RTAB-Map is the easiest tool to use for this, so the odometry comes from rtabmap.

# When playing back a bag

rosparam set /use_sim_time true

rosbag play nvblox_comp_20260103_220009.bag --clock --pause

roslaunch rtabmap_ros rtabmap.launch \
  rgb_topic:=/camera/color/image_raw \
  depth_topic:=/camera/aligned_depth_to_color/image_raw \
  camera_info_topic:=/camera/color/camera_info \
  frame_id:=camera_link \
  odom_frame_id:=odom \
  approx_sync:=true \
  rtabmapviz:=false

roslaunch nvblox_ros nvblox_ros_panopt.launch \
  rviz:=false \
  global_frame:=odom \
  is_realsense_data:=true \
  pose_frame:=camera_color_optical_frame \
  map_clearing_frame_id:=camera_link

Make sure to record the IMU together with the camera topics.

# Terminal 1

rosparam set /use_sim_time true

rosbag play nvblox_comp_20260104_193237.bag --clock --pause

# Terminal 2 - IMU filter

rosrun imu_filter_madgwick imu_filter_node \
  _use_mag:=false \
  _publish_tf:=false \
  _world_frame:=enu \
  /imu/data_raw:=/camera/imu \
  /imu/data:=/imu/data_filtered

# Terminal 3 - rtabmap (uses the filtered IMU)

roslaunch rtabmap_ros rtabmap.launch \
  rgb_topic:=/camera/color/image_raw \
  depth_topic:=/camera/aligned_depth_to_color/image_raw \
  camera_info_topic:=/camera/color/camera_info \
  frame_id:=camera_link \
  odom_frame_id:=odom \
  approx_sync:=true \
  rtabmapviz:=false \
  imu_topic:=/imu/data_filtered \
  wait_imu_to_init:=true

# Terminal 4 - odom to pose relay

python ~/odom_to_pose_relay.py

# Terminal 5 - nvblox

roslaunch nvblox_ros nvblox_ros_panopt.launch \
  rviz:=false \
  global_frame:=odom \
  is_realsense_data:=true \
  pose_frame:=camera_link \
  map_clearing_frame_id:=camera_link

It uses less GPU memory than expected: around 500 MB.

+ odom_to_pose_relay.py

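#!/usr/bin/env python
"""Relay RTAB-Map odometry as a PoseStamped on /pose."""
import rospy
from nav_msgs.msg import Odometry
from geometry_msgs.msg import PoseStamped


def callback(msg):
    # Copy the header (stamp and frame_id) and the pose out of the Odometry message.
    pose = PoseStamped()
    pose.header = msg.header
    pose.pose = msg.pose.pose
    pub.publish(pose)


rospy.init_node('odom_to_pose_relay')
# The publisher is created before the subscriber so the callback can always use it.
pub = rospy.Publisher('/pose', PoseStamped, queue_size=10)
rospy.Subscriber('/rtabmap/odom', Odometry, callback)
rospy.spin()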

+ At first I planned to get the W2C poses with COLMAP, but it was too much hassle, so I ran RTAB-Map instead.

export QT_QPA_PLATFORM=offscreen

WS=/home/*/data/colmap_ws

IMG=/home/*/data/export/color

DB=$WS/database.db

SPARSE=$WS/sparse

mkdir -p "$WS" "$SPARSE"

rm -f "$DB"

# 1) Feature extraction

xvfb-run -a colmap feature_extractor \
  --database_path "$DB" \
  --image_path "$IMG" \
  --ImageReader.single_camera 1 \
  --ImageReader.camera_model PINHOLE

# 2) Matching (sequential, since the frames are consecutive)

xvfb-run -a colmap sequential_matcher \
  --database_path "$DB" \
  --SequentialMatching.overlap 10

# 3) Sparse reconstruction

xvfb-run -a colmap mapper \
  --database_path "$DB" \
  --image_path "$IMG" \
  --output_path "$SPARSE"

# Check the results

ls -lah "$SPARSE"

ls -lah "$SPARSE/0" | head

ls -lah /home/*/data/colmap_ws/sparse/0 | head -n 30
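If the COLMAP route is taken, one detail to keep in mind is that COLMAP stores each image pose as a world-to-camera (W2C) quaternion and translation, so the pose has to be inverted to get the camera pose in the world frame. A minimal sketch of that inversion in plain Python; `quat_to_rot`, `invert_w2c`, and the numeric values are illustrative assumptions and do not come from an actual reconstruction. A reconstruction made from images alone also has an arbitrary scale, so it would still need metric scaling before being used with depth data.

```python
import math


def quat_to_rot(qw, qx, qy, qz):
    """Unit quaternion (w, x, y, z) -> 3x3 rotation matrix as nested lists."""
    n = math.sqrt(qw * qw + qx * qx + qy * qy + qz * qz)
    w, x, y, z = qw / n, qx / n, qy / n, qz / n
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]


def invert_w2c(R_w2c, t_w2c):
    """Invert x_cam = R * x_world + t into a camera-to-world rotation and camera center."""
    R_c2w = [[R_w2c[j][i] for j in range(3)] for i in range(3)]  # transpose
    center = [-sum(R_c2w[i][j] * t_w2c[j] for j in range(3)) for i in range(3)]
    return R_c2w, center


# Hypothetical W2C pose: 90 degree rotation about z, translation (1, 2, 3).
half = math.radians(90.0) / 2.0
R = quat_to_rot(math.cos(half), 0.0, 0.0, math.sin(half))
t = [1.0, 2.0, 3.0]

R_c2w, camera_center = invert_w2c(R, t)
print(camera_center)
```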

Planning
